The unique challenges faced by fintech data teams

No matter what industry or vertical you’re in you’ll have a set of challenges that are unique and difficult

- If you’re in B2B you may have to worry about data being used for billing customers or not having enough data to run significant A/B tests

- If you work in B2C you have to handle many millions of users interacting with your product each day

- If you work in a marketplace you’re under pressure to create efficiencies and keeping data systems operational over weekends and holidays

However, one vertical have it harder than most; fintech data teams.

Fintech data teams have to balance critical data, regulatory pressure and fast growth. When data is wrong the stakes are high.

Fintech is the most invested industry worldwide with startups raising $21.5B globally in Q2 2022 and more than 50% going into $100m+ rounds. In other words, there has been a lot of growth in these companies.

As companies grow fast, so does the data team. The median data as % of workforce in fintech companies is 4% with an average data to engineer ratio of 0.25x. It’s not uncommon to see data teams exceeding 100 people in larger fintech scaleups.

What makes life in fintech data teams uniquely hard?

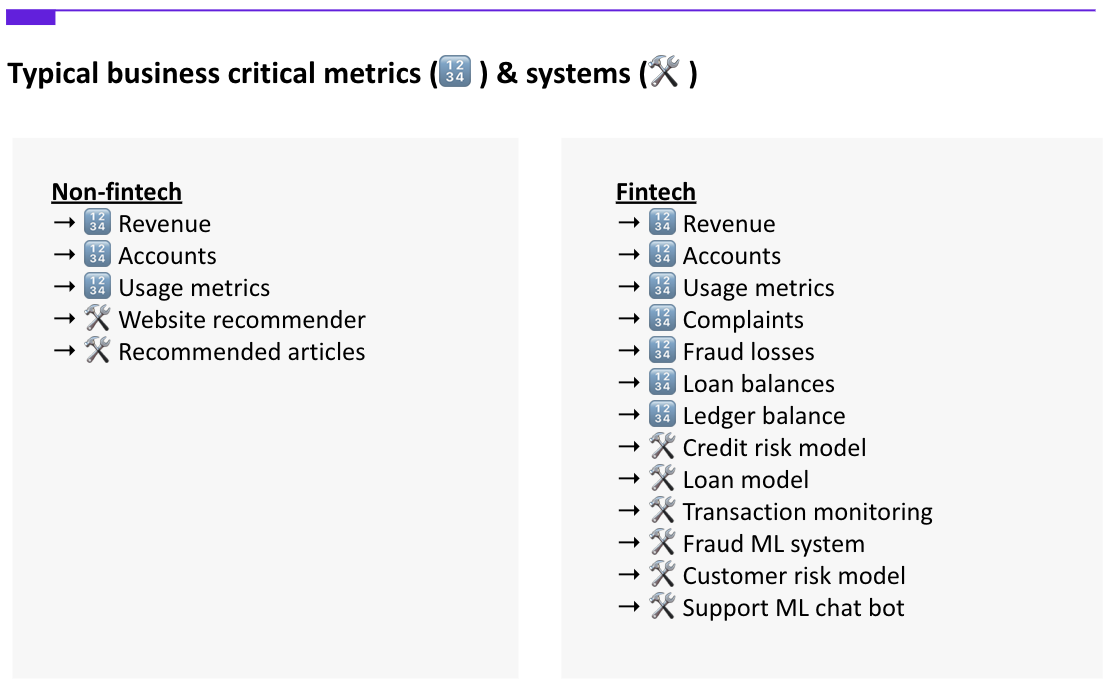

The stakes are just higher in fintech. If you compare a B2C and fintech company, there are typically an order of magnitude more critical metrics and systems to stay on top of.

If a recommender system doesn’t work as intended on an eCommerce website you may lose some revenue. If the transaction monitoring system in a startup bank is faulty, you’ll have fraudsters flocking to the platform, you’ll need to notify regulators and you may get a multi-million dollar fine.

The combination of many people contributing to the same code base of dbt models and dashboards and having dozens of business-critical use cases makes the challenge for fintech uniquely hard.

This is well illustrated in a typical dbt lineage. If you’ve got thousands of data assets, good luck picking out if anything business-critical is impacted from an upstream error. Let alone figuring out who caused the issue or who should be notified downstream.

A typical spaghetti mess of a lineage. Was something critical downstream impacted by an upstream error? Nobody knows This article has some recommendations for how to manage ownership in-and outside the data team for both embedded and centralised teams.

The unique challenges faced by fintech teams can be seen through different lenses.

Regulatory reporting

Almost all fintech companies are overseen by a regulator. In the UK it’s the Financial Conduct Authority (FCA) and the Prudential Regulation Authority (PRA). Regulators put a lot of scrutiny on fintechs which translates into challenging work for data teams. If companies get it wrong there are consequences and there’s a long list of companies being fined for lack of controls or providing wrong data.

“N26 fined €4.25m for weak money-laundering controls. German regulator rebukes the fast-growing online bank and has installed a supervisor to monitor progress” - Financial Times

Often, a fine is just the tip of the iceberg. The company afterwards has to invest in improving controls and in the worst case regulators can take actions such as preventing the company from onboarding new users.

Dealing with regulators is no easy task for data teams

- Definitions–deadlines are short and there’s little room for questions or interpretations around regulatory requests which means you need robust definitions of metrics and a log of previous requests

- Accuracy–there’s a high demand for data accuracy. While you may get away with having made a mistake in a data pull for a market sizing analysis, there’s less tolerance for errors when it comes to regulators. This puts pressure on the level of testing and monitoring required

- Reproducibility–data needs to be reproducible. If you’re asked to resubmit data from six months ago, you need to be able to get that to match up Often, regulatory requests come with short deadlines and the data team may have to drop everything else to jump on it. The combination of the high accuracy needed and time pressure can make these a strain on the data team.

Fraud and anti money laundering (AML)

Fintech companies almost always have some requirements when it comes to detecting money laundering on their platform and preventing customers from being the target of fraud. The data team is often involved as many of these problems have proven to be suitable applications for machine learning.

However, managing a machine learning system that decides if a customer should be allowed to sign up on the platform or is a potential fraudster and should be reported to the authorities comes with a big responsibility.

If your transaction monitoring system has dependencies on a data model that wasn’t updated for two days, you may end up approving transactions for customers that are outside your risk appetite. This may mean you have to notify regulators. As the number of machine learning systems and dependencies on data models grow, so does the number of critical dependencies and things that can go wrong.

Product analytics

In fintech you have to take a more cautious approach to A/B testing and experimentation. Not only do you have to track impact of launches on the key metric you’re optimising for but you also have to be able to report on health metrics. If you ship an experiment to make more customers solve their issues through self serve instead of getting in touch with customer support you have to ask yourself some questions first

- How does this impact other channels? Do people call in instead? What’s the implications of wait time and staffing levels and how does that match what we’ve committed to regulators?

- How is this perceived by customers? Are we seeing an increase in complaints? How about on our forums or social media. Is there any reputable risk?

- How do we convey to regulators that we can safely move on with releasing this to the entire user base?

Customer experience

Most companies are focused on the customer experience and may have set goals to improve the NPS or customer satisfaction score for support tickets. Fintechs have the same challenges but with more scrutiny. Among others, they’re required to report complaints to regulators (in the UK, FCA collects complaints data in a central database https://www.fca.org.uk/data/complaints-data).

Customer experience data often comes from various third party systems. This creates a challenge for data teams of having to extract data from third party or internal tools and at the same time guarantee the accuracy. Building data controls on data imported from third party sources, or in some cases spreadsheets, is challenging as the raw data is more prone to manual input errors. This article has some recommendations for steps you can take to make life easier when using spreadsheets as part of your data stack.

Data quality metrics

Where some companies may want to show data quality metrics to be able to demonstrate progress to stakeholders, fintechs may be required to report on downtime of a critical system. For example, if there’s a known issue on the machine learning model that determines if a new user is eligible to sign up, metrics around downtime and impacted users may have to be shared with regulators.

As data teams venture into owning production-grade systems, terms known from traditional software such as mean time to detect (MTTD) and mean time to resolve (MTTR) will be required to indicate the system’s health from the perspective of detecting/recovering production issues. However, most data teams and systems are not set up well to capture this.

Historical consistency of data

If you work in a B2C company and you can’t get data to match what you showed the CFO six months ago you may look slightly embarrassed. For fintechs this may have more severe consequences.

For example, if you submitted data to a regulator you need to be able to get that to match if you’re asked to resubmit it later on.

This can be a difficult task for data teams, as not only do you need to maintain a snapshot of key data, but you also need to know of all dependencies and their history to be able to recreate the state as it was. Building a testing suite to catch historical issues can be a daunting task even for the most sophisticated data teams.

.png)

.png)